Too Slow: AI Adoption & The Future of Large Companies

ChatGPT was released almost four years ago, yet some large companies are still painfully working on deploying their first AI bot. Younger companies, with an AI mindset built in by more adaptable founders, are cruising ahead.

The COO of a fast-growing FinTech startup told me that their new developer had built and deployed several features and tools within his first two weeks of employment. The company has a ‘live before complete’ policy of rapid iteration and feature development, an approach shared by companies such as Anthropic, which developed and released ‘Cowork’ in just 10 days.

This kind of speed is something many large companies can only dream of. Weighed down by bureaucracy, these firms are slow to get anything meaningful into the hands of employees. As a result, some workers are building AI tools with their own licences and API keys to make their work easier.

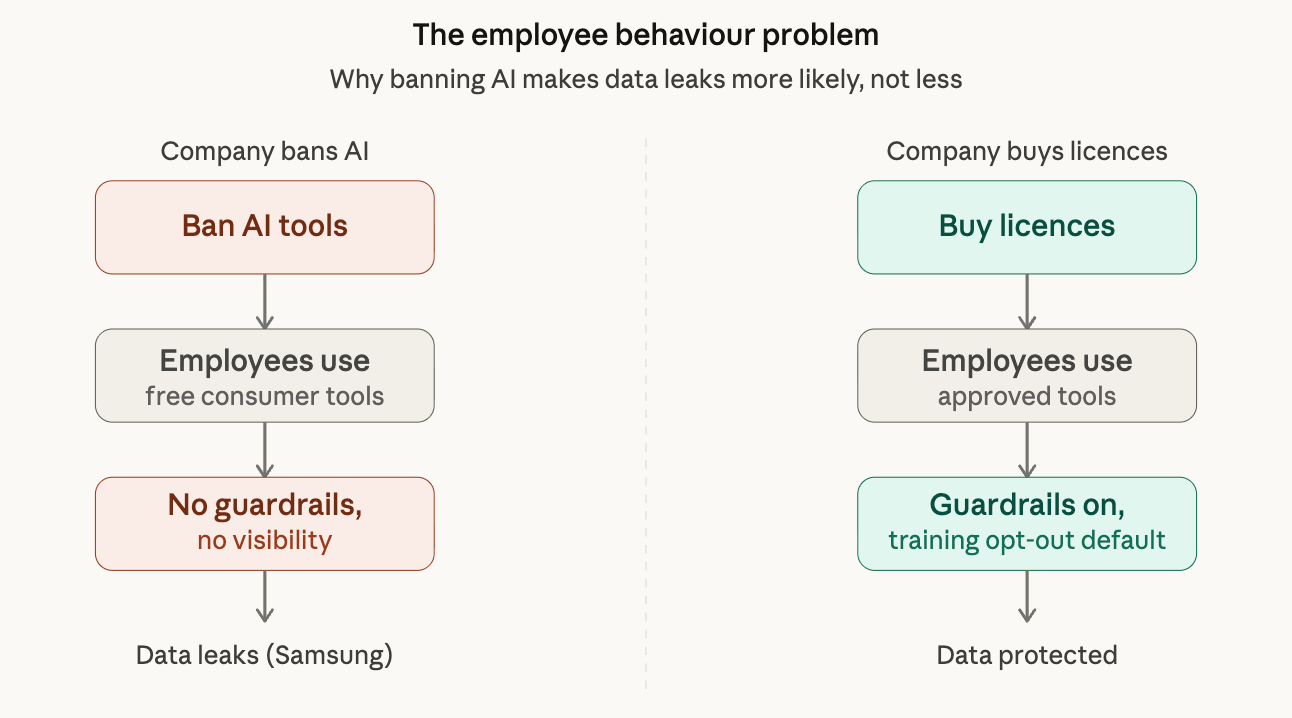

This mirrors what we saw when ChatGPT was first released. A lot of companies blocked the chatbot at the firewall level to prevent its use, but employees simply opened the app on their phones. Companies should provide AI tools, or expect employees to find a way around their lame AI restrictions.

Whilst some companies are moving at record speeds, others are moving at a glacial pace. Startups can challenge incumbents by producing and iterating at rates that were impossible only a couple of years ago.

Security concerns

Security concerns surrounding AI are high, and validly so: 45% of AI-generated code has security flaws. The key is to use AI as a companion and check its results, not to disregard it altogether. The risk of avoiding AI over safety concerns is that you will be the safest company to go out of business.

Companies can opt out of having their conversations used to train models. This is a perfect example of why companies should buy licences for ChatGPT or Claude themselves, rather than relying on each employee to opt out of data sharing.

The real threat is employee behaviour. Samsung had an infamous case where engineers pasted chip design code into ChatGPT’s free tier. The solution isn’t to prevent AI use. That just pushes employees towards consumer tools with no protection. The solution is to purchase licences and add guardrails to prevent data leakage. There is a trade-off analysis here that some large enterprises are seemingly unwilling or unable to conduct.
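To make "guardrails" a little more concrete, here is a minimal sketch of one possible approach: scrubbing obviously sensitive strings from a prompt before it leaves the company network. The patterns, the `redact` function, and the `corp.example.com` domain are all hypothetical and chosen purely for illustration. A real deployment would more likely rely on a maintained secret scanner or data loss prevention service than on a handful of regular expressions.

```python
import re

# Hypothetical patterns for illustration only. Real guardrails would use
# patterns tuned to the company's own secrets, hostnames and data formats.
PATTERNS = {
    "api_key": re.compile(r"\b(?:sk|pk)-[A-Za-z0-9]{16,}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "internal_host": re.compile(r"\b[\w-]+\.corp\.example\.com\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace sensitive matches with placeholders and list what was found."""
    findings = []
    for name, pattern in PATTERNS.items():
        # subn returns the rewritten text and the number of replacements made
        prompt, count = pattern.subn(f"[REDACTED_{name.upper()}]", prompt)
        if count:
            findings.append(name)
    return prompt, findings


if __name__ == "__main__":
    text = "Deploy with sk-abcdefghijklmnop1234 on build01.corp.example.com"
    clean, found = redact(text)
    print(clean)
    print(found)
```

A filter like this could sit in a proxy between employees and the licensed AI tool, and the list of findings could feed the kind of usage visibility that management otherwise lacks.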

My friend works for an S&P 500 technology company. Allow me to say that again: a technology company. Almost four years after ChatGPT’s release, upper management sent out a Google Form asking employees about their AI usage so that the answers could inform tooling, governance, and investment decisions. In 2026, a huge company doesn’t have visibility into how its own workforce is using AI. That is a disaster. Whilst small startups are scaling rapidly, some established firms are stuck in the mud, crowdsourcing basic awareness via Google Forms…

More surprisingly, upper management were scared of AI stealing data that was already public. My friend had created a couple of AI chatbots trained on data that anyone can download from the company’s public site. This fear is ridiculous, and here’s why. It is extremely likely that any documentation a major corporation hosts at publicly accessible URLs has already ended up in an LLM training dataset. Common Crawl, a repository of web crawl data that most LLMs are trained on, has probably crawled your free PDFs. OpenAI, Anthropic, and other AI companies have all crawled massive portions of the public web for training data as well. This lack of awareness of basic technology fundamentals will be the death of productivity in the AI era.

Many upper managers achieved their leadership positions pre-AI. Their skills were not developed for the current state of the world. Some leaders have taken it upon themselves to upskill and have done so excellently. Many managers are driving their companies forward and making massive productivity gains. Some, however, have not. Rather, they have been quasi fear-mongering and stalling, perhaps because they lack understanding and fear being replaced by more tech-savvy employees.

The world has changed. There is no going back. Management teams that don’t understand the new technology landscape will run their companies into the ground.

The future of development

Rather than writing code themselves, many top engineers are delegating that task to Claude Code and only reviewing the output. Around 50% of all Claude queries are for software engineering, suggesting where the industry may be heading. The head of Claude Code, Boris Cherny, admitted to not having written a single line of code since November 2025. He predicts that in one to two years, AI will be capable of writing and deploying all code. That may seem a wild statement now, yet 4% of all GitHub commits are already made by AI. What was impossible only a year ago is now a reality.

But wait a minute. What about the costs? You would be right to ask. Some Anthropic engineers are spending hundreds of thousands of dollars a month on tokens, more than many engineers’ yearly salaries. However, the £100-a-month Pro plan is extremely powerful and a worthwhile investment for many individuals and enterprises.

Predictions about the future of AI are just that: predictions. However, we do know that it is changing and growing at an exponential rate. It has never been easier to launch a competitor in an established industry with a small team. Companies that are not switched on to the changing world of AI will find themselves falling behind.